75 times faster than hyperscaler GPUs, Cerebras says.

Cerebras says it has run Meta’s Llama 3.1 405B large language model at 969 tokens per second, which it claims is 75 times faster than Amazon Web Services’ fastest GPU-based AI service.

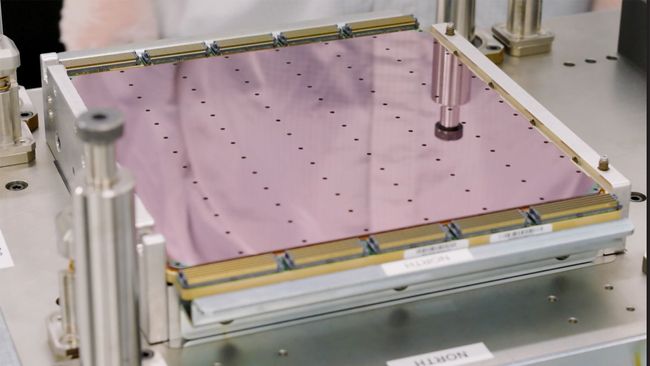

The LLM ran on Cerebras Inference, the company’s cloud AI service, which uses its third-generation Wafer Scale Engines rather than GPUs from Nvidia or AMD. Cerebras has always claimed that the service is the fastest at generating tokens, the individual units that make up an LLM’s response. When it launched in August, Cerebras Inference was claimed to be about 20 times faster than Nvidia GPUs running through cloud providers like Amazon Web Services on Llama 3.1 8B and Llama 3.1 70B.

But since July, Meta has offered Llama 3.1 405B, which has 405 billion parameters, making it a far heavier model than Llama 3.1 70B with 70 billion parameters. Cerebras says its Wafer Scale Engine processors can run this massive LLM at “instant speed,” with a token rate of 969 per second and a time-to-first-token of just 0.24 seconds; that’s a world record according to the company, not just for its chips, but also for the Llama 3.1 405B model.
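To put the two headline figures in perspective, here is a minimal Python sketch that estimates how long a complete response would take. It assumes a simple model in which the time-to-first-token is followed by streaming at a constant token rate; the function name and the 1,000-token example length are illustrative assumptions, not figures published by Cerebras.

```python
# Figures reported by Cerebras for Llama 3.1 405B.
TOKENS_PER_SECOND = 969.0
TIME_TO_FIRST_TOKEN_S = 0.24


def estimated_response_seconds(
    output_tokens: int,
    tokens_per_second: float = TOKENS_PER_SECOND,
    time_to_first_token_s: float = TIME_TO_FIRST_TOKEN_S,
) -> float:
    """Rough latency estimate: initial wait plus constant-rate streaming.

    Assumption: the token rate stays constant for the whole response,
    which real services do not guarantee.
    """
    if output_tokens <= 0:
        raise ValueError("output_tokens must be positive")
    return time_to_first_token_s + output_tokens / tokens_per_second


if __name__ == "__main__":
    # Hypothetical 1,000-token answer (the length is an assumed example).
    print(f"{estimated_response_seconds(1000):.2f} s")
```

Under these assumptions, a 1,000-token answer would arrive in a little over a second.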

According to Cerebras, its Inference service was 75 times faster than Nvidia GPUs rented from AWS and 12 times faster than even the fastest Nvidia GPU implementation, from Together AI. Cerebras Inference was also six times faster than its closest competitor, AI processor designer SambaNova.
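The speedup factors can be turned back into approximate token rates for each competitor. The sketch below does that arithmetic, assuming every multiple is measured against the same 969 tokens-per-second result on the same workload; the resulting rates are derived estimates, not measurements reported in the article.

```python
CEREBRAS_TOKENS_PER_SECOND = 969.0

# Speedup factors as claimed by Cerebras.
REPORTED_SPEEDUPS = {
    "AWS (Nvidia GPUs)": 75.0,
    "Together AI (Nvidia GPUs)": 12.0,
    "SambaNova": 6.0,
}


def implied_tokens_per_second(
    speedup: float, reference_rate: float = CEREBRAS_TOKENS_PER_SECOND
) -> float:
    """Back out a competitor's token rate from a claimed speedup factor."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return reference_rate / speedup


if __name__ == "__main__":
    for provider, speedup in REPORTED_SPEEDUPS.items():
        rate = implied_tokens_per_second(speedup)
        print(f"{provider}: about {rate:.1f} tokens per second")
```

On those assumptions, the AWS figure works out to roughly 13 tokens per second, Together AI to about 81, and SambaNova to about 162.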

To illustrate the difference, Cerebras prompted both Fireworks (the fastest AI cloud service equipped with GPUs) and Cerebras Inference to create a chess program in Python. Cerebras Inference took about three seconds, while Fireworks took 20.

“Llama 3.1 405B on Cerebras is by far the fastest frontier model in the world – 12x faster than GPT-4o and 18x faster than Claude 3.5 Sonnet,” Cerebras said. “Thanks to the combination of Meta’s open approach and Cerebras’s breakthrough inference technology, Llama 3.1-405B now runs more than 10 times faster than closed frontier models.”

Even when the query size was raised from 1,000 tokens to 100,000 tokens (a prompt of tens of thousands of words), Cerebras Inference reportedly ran at 539 tokens per second. Of the five other services that could run this workload at all, the best managed just 49 tokens per second.
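The long-prompt figures imply two ratios worth spelling out: the lead over the best rival and the share of short-prompt throughput that Cerebras Inference retains. The sketch below computes both from the numbers quoted in this article; the helper name is an illustrative assumption.

```python
# Token rates quoted in the article.
SHORT_PROMPT_RATE = 969.0   # Cerebras, 1,000-token query
LONG_PROMPT_RATE = 539.0    # Cerebras, 100,000-token query
BEST_RIVAL_LONG_RATE = 49.0  # best of the five other services


def ratio(numerator: float, denominator: float) -> float:
    """Plain ratio with a guard against a non-positive denominator."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return numerator / denominator


if __name__ == "__main__":
    lead = ratio(LONG_PROMPT_RATE, BEST_RIVAL_LONG_RATE)
    retained = ratio(LONG_PROMPT_RATE, SHORT_PROMPT_RATE)
    print(f"Lead over best rival: {lead:.0f}x")
    print(f"Throughput retained at 100,000 tokens: {retained:.0%}")
```

By this arithmetic, Cerebras Inference is 11 times faster than the best rival on the long prompt while keeping a bit more than half of its short-prompt token rate.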